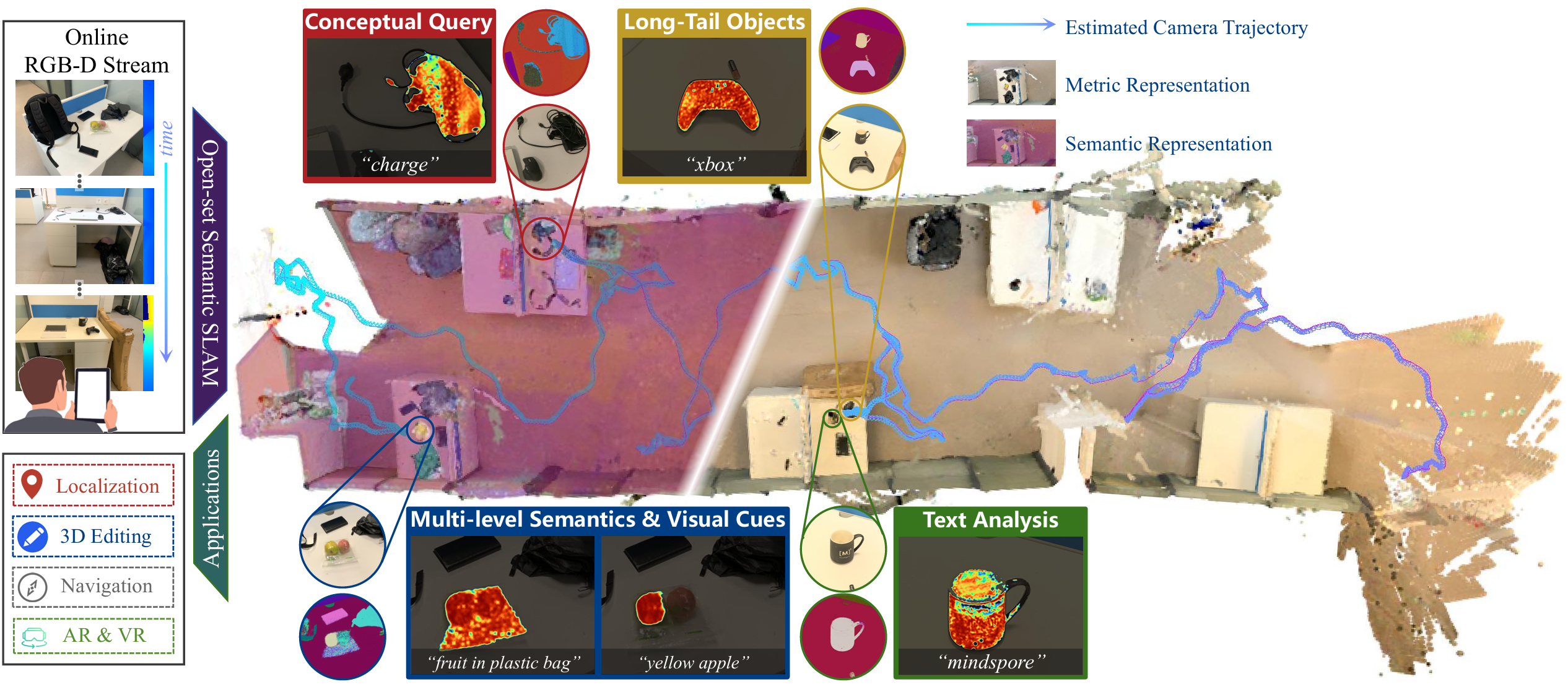

Abstract: This work enables everyday devices, e.g., smartphones, to dynamically capture open-ended 3D scenes with rich, expandable semantics for immersive virtual worlds. While 3D Gaussian Splatting (3DGS) and foundation models hold promise for semantic scene understanding, existing solutions suffer from unscalable semantic integration, prohibitive memory costs, and cross-view inconsistency. To address these limitations, we propose Open-Set Semantic Gaussian Splatting SLAM, a GS-SLAM system augmented by an expandable semantic feature pool that decouples condensed scene-level semantics from individual 3D Gaussians. Each Gaussian references semantics via a lightweight indexing vector, reducing memory overhead by orders of magnitude while supporting dynamic updates. In addition, we introduce a consistency-aware optimization strategy alongside a Semantic Stability Guidance mechanism to improve long-term, cross-view semantic consistency. Experiments demonstrate that our system achieves high-fidelity rendering with scalable, open-set semantics across both controlled and in-the-wild environments, supporting applications such as 3D localization and scene editing. These results mark an initial yet solid step towards high-quality, expressive, and accessible 3D virtual world modeling.

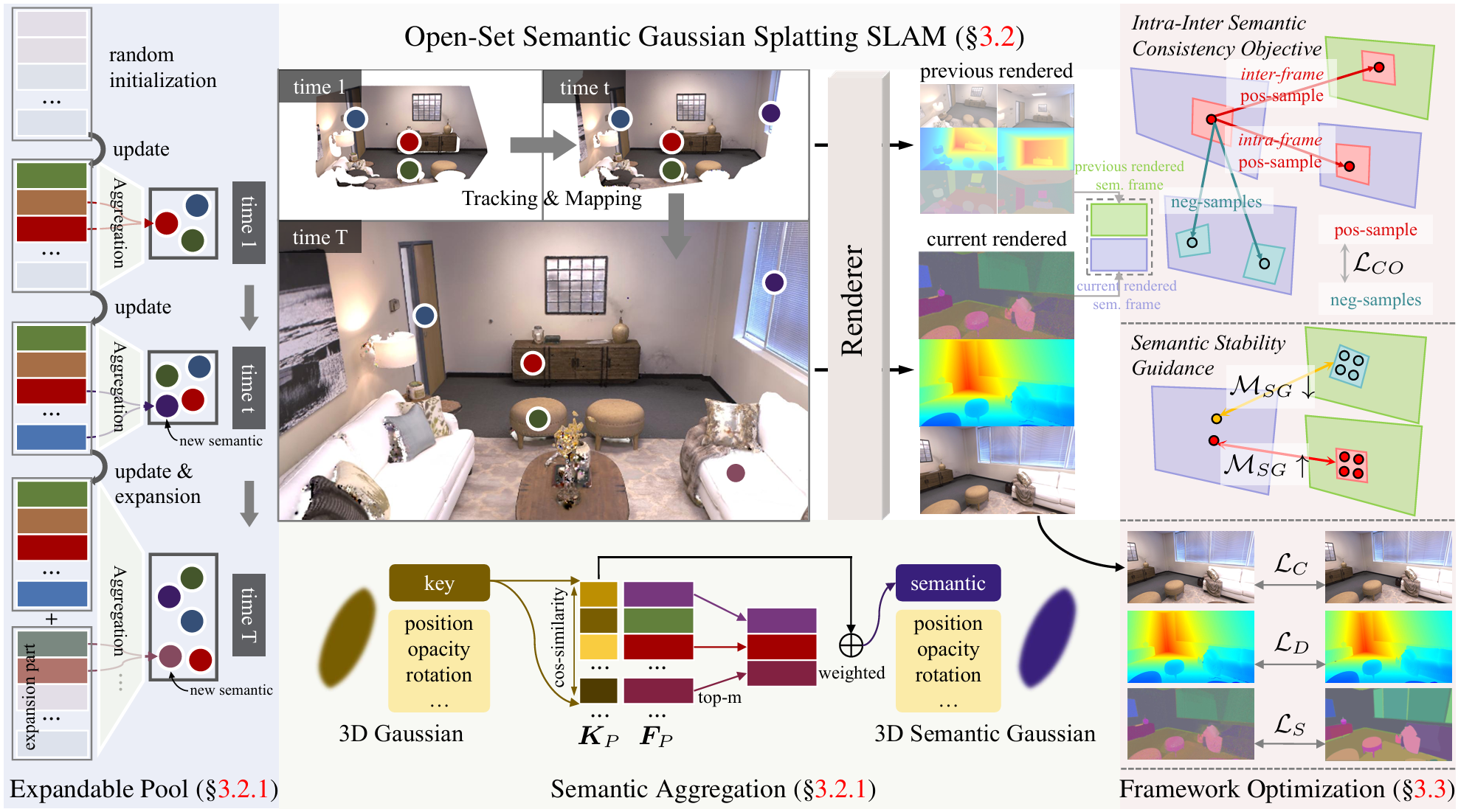

Framework Overview. We enhance existing 3DGS-based SLAM with an expandable semantic representation, introducing a learnable semantic feature pool that stores condensed scene-level semantics and supports dynamic expansion. Each Gaussian retrieves its semantic feature via soft aggregation from the shared pool through a lightweight key. To improve cross-view and temporal consistency, we further introduce an Intra-Inter Semantic Consistency Objective and a Semantic Stability Guidance mechanism, enabling stable and coherent open-set semantic reconstruction during SLAM.
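The pool-and-key lookup described above can be illustrated with a minimal NumPy sketch. It is not the authors' implementation: it assumes that each Gaussian's key is a vector of logits over the pool entries, that "soft aggregation" is a softmax-weighted sum, and that expansion appends new entries while padding existing keys with a large negative logit. The names `SemanticFeaturePool`, `pad_keys`, and all sizes are hypothetical.

```python
import numpy as np


def softmax(x, axis=-1):
    """Numerically stable softmax."""
    x = x - x.max(axis=axis, keepdims=True)
    e = np.exp(x)
    return e / e.sum(axis=axis, keepdims=True)


class SemanticFeaturePool:
    """Hypothetical shared pool of scene-level semantic features."""

    def __init__(self, num_entries, feat_dim, seed=0):
        rng = np.random.default_rng(seed)
        # (K, D) pool entries; learnable in a real system, random here.
        self.features = rng.standard_normal((num_entries, feat_dim)).astype(np.float32)

    def expand(self, num_new, seed=1):
        """Append new entries, e.g. when unseen semantics appear."""
        rng = np.random.default_rng(seed)
        new = rng.standard_normal((num_new, self.features.shape[1])).astype(np.float32)
        self.features = np.concatenate([self.features, new], axis=0)

    def aggregate(self, keys):
        """keys: (N, K) per-Gaussian logits -> (N, D) per-Gaussian features."""
        weights = softmax(keys, axis=-1)  # assumed form of soft aggregation
        return weights @ self.features


def pad_keys(keys, num_new, fill=-1e4):
    """Pad existing keys so new pool entries start with ~zero weight."""
    pad = np.full((keys.shape[0], num_new), fill, dtype=keys.dtype)
    return np.concatenate([keys, pad], axis=1)


pool = SemanticFeaturePool(num_entries=4, feat_dim=8)
keys = np.zeros((3, 4), dtype=np.float32)  # 3 Gaussians, uniform keys
feats = pool.aggregate(keys)               # shape (3, 8)

pool.expand(num_new=2)
keys = pad_keys(keys, num_new=2)
feats_after = pool.aggregate(keys)         # old Gaussians keep their features
```

Under these assumptions, each Gaussian stores only a K-dimensional key rather than a full D-dimensional feature, which is where the memory saving would come from when K is much smaller than D.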

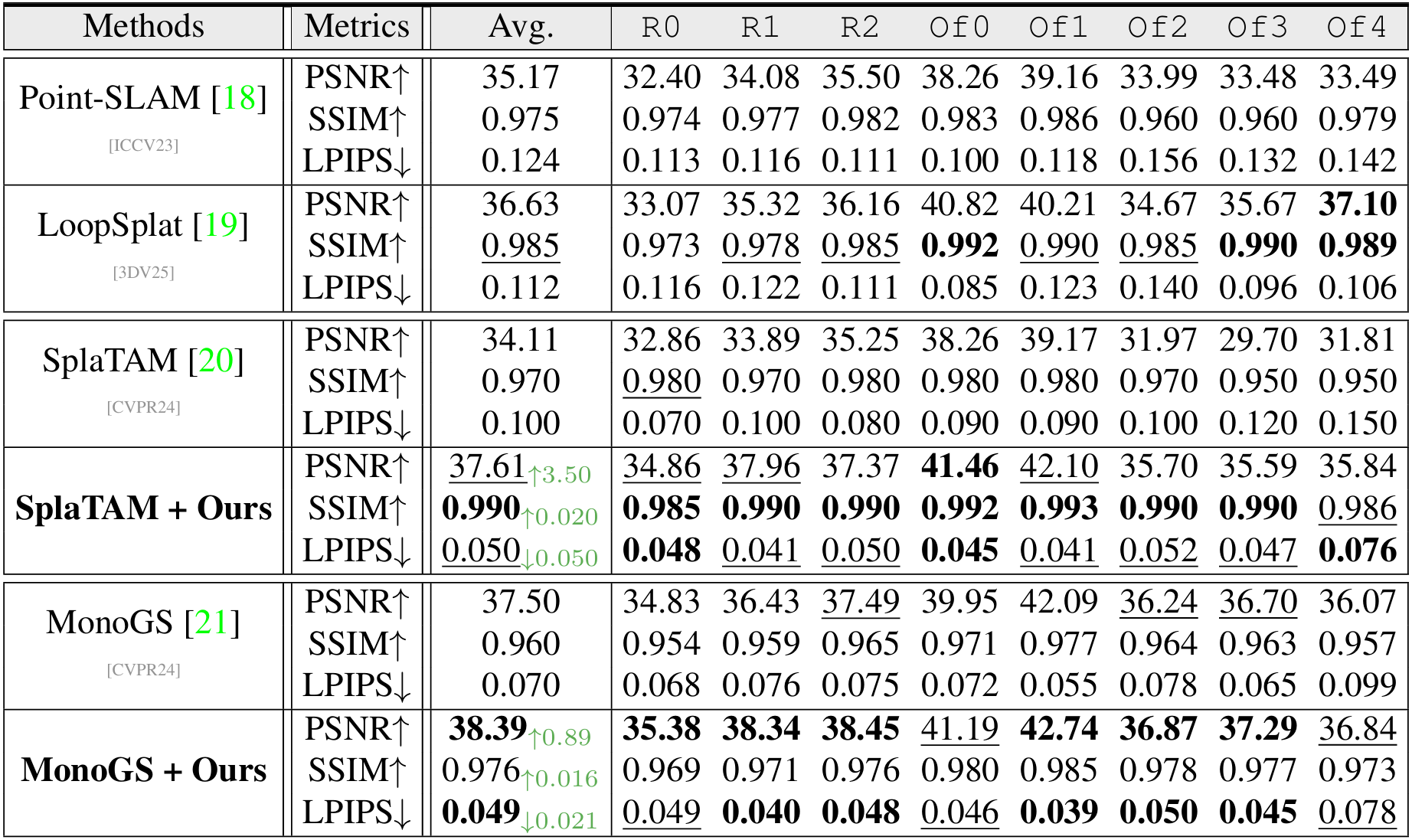

Results on Replica

Rendering Results on Replica

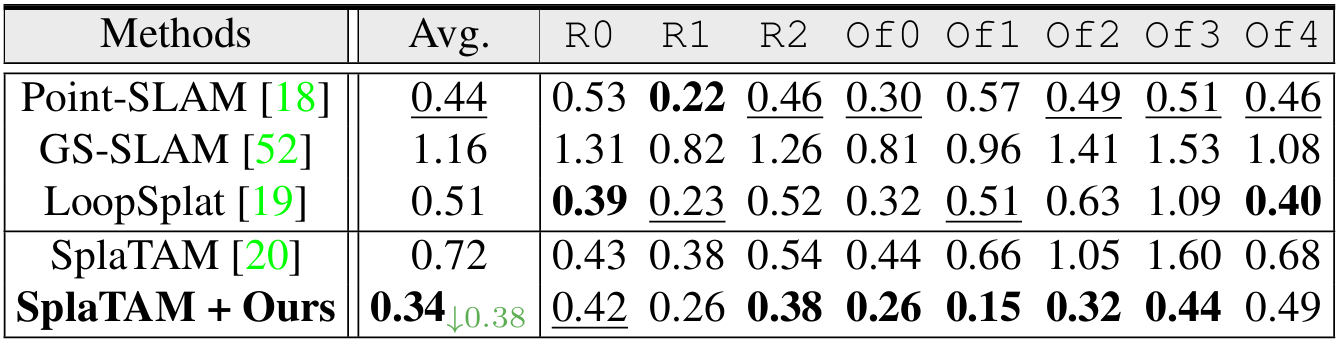

Reconstruction Results on Replica

Rendering Comparisons over SplaTAM on Replica

Rendering Comparisons over Point-SLAM on Replica

Rendering Comparisons on ScanNet and TUM

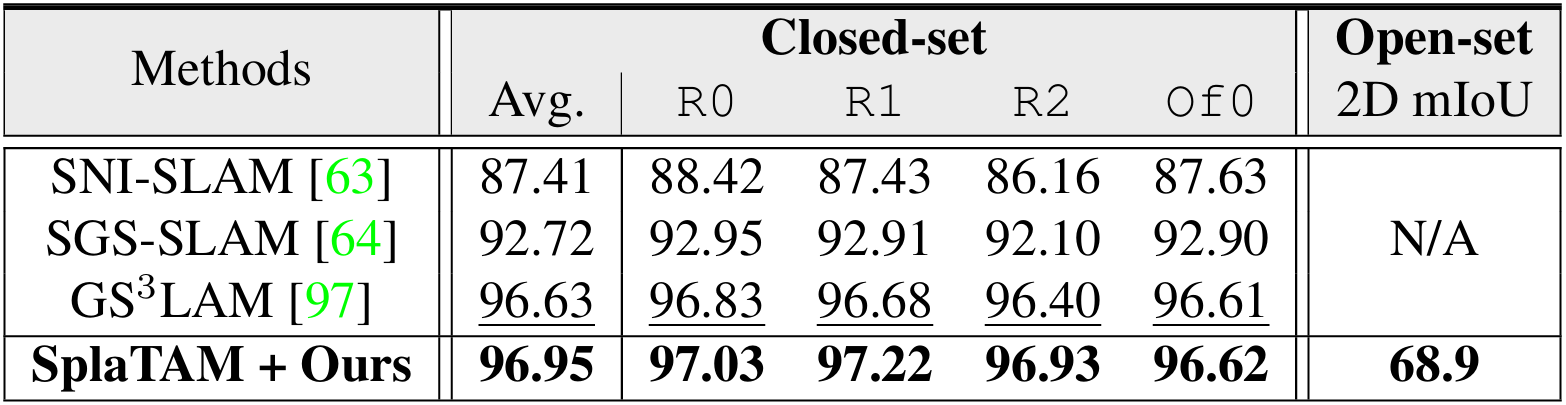

Comparisons with Closed-Set Semantic SLAM

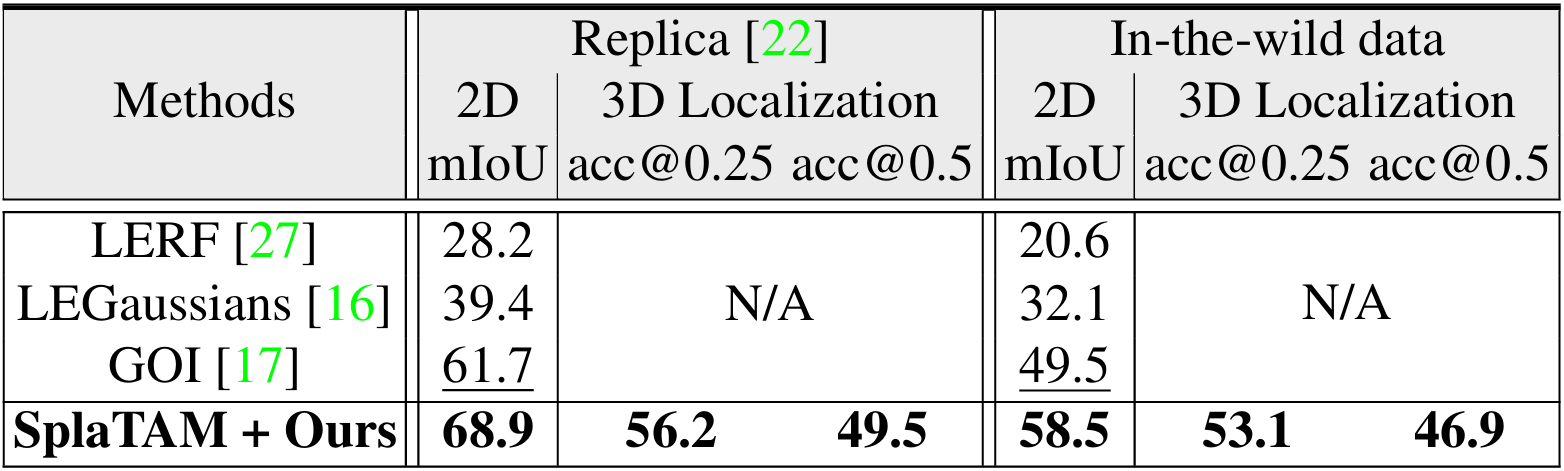

Comparisons with SfM-based Open-Set Semantic Methods

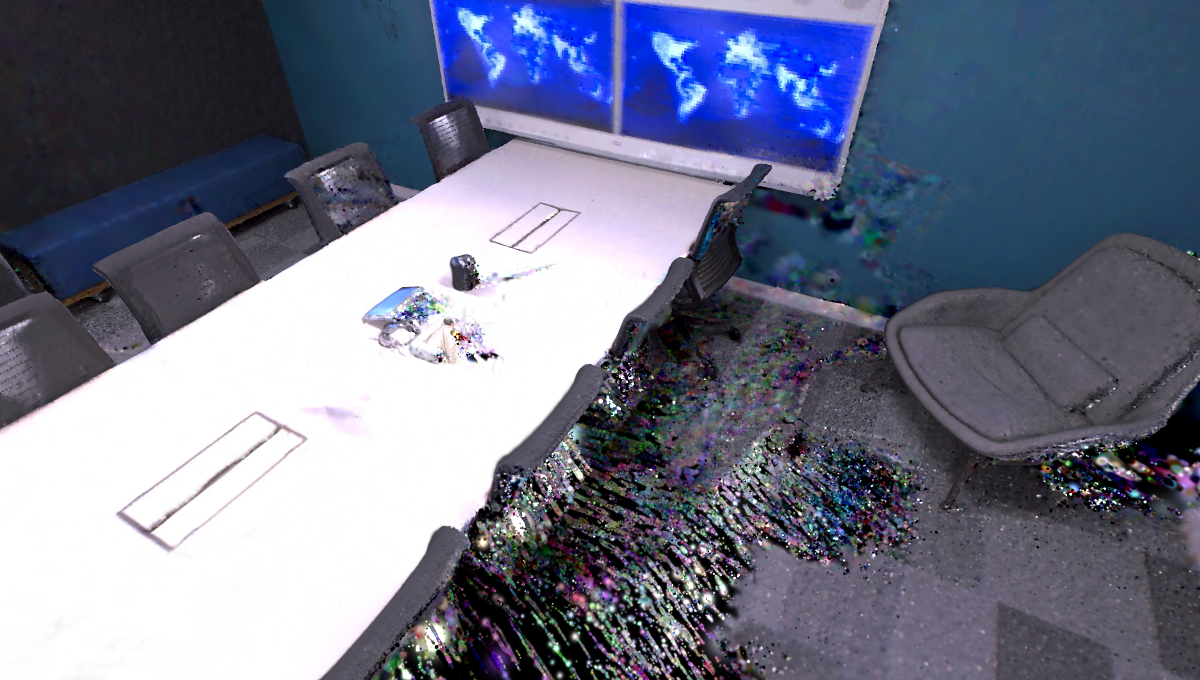

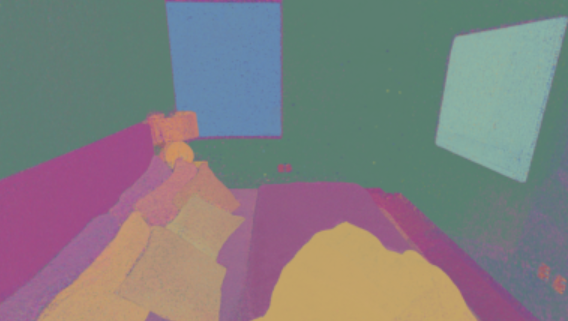

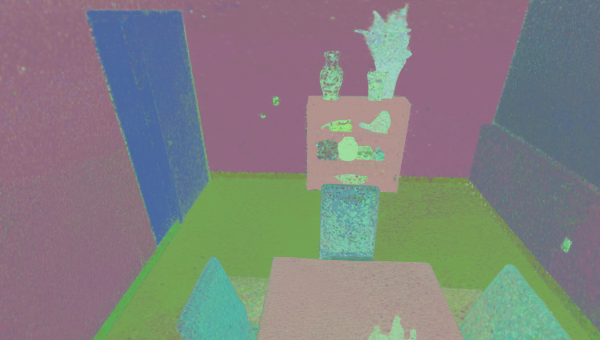

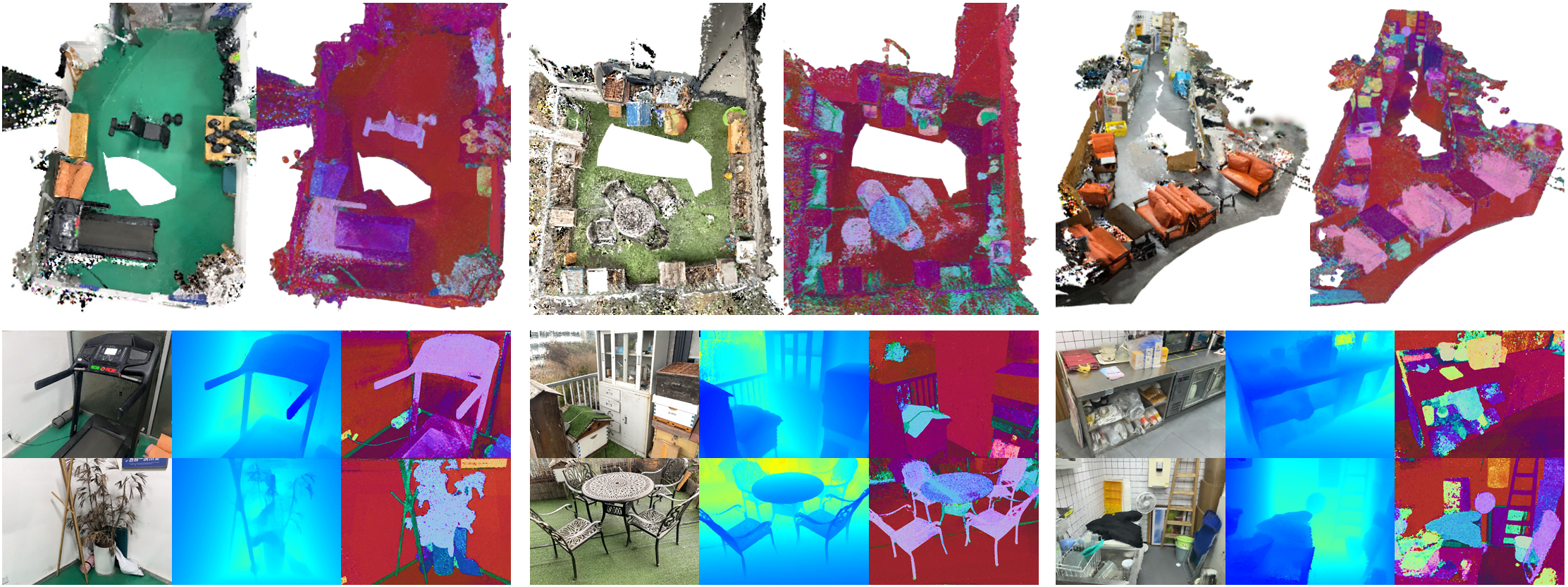

Open-Set Semantic Reconstruction Results on Replica

Visualization of Replica room0. The first row shows the full reconstruction results, while the second row shows heatmaps generated from open-set semantic queries to localize objects in the scene.
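One plausible way to produce such a query heatmap is sketched below. The page does not state how the heatmaps are computed, so this assumes a rendered per-pixel feature map and a query embedding living in the same space (for example, from a CLIP-like model), scored by cosine similarity and min-max normalized. The function name `query_heatmap` and the toy data are hypothetical.

```python
import numpy as np


def query_heatmap(feature_map, query, eps=1e-8):
    """Cosine-similarity heatmap between per-pixel features and a query.

    feature_map: (H, W, D) rendered semantic features (assumed input).
    query:       (D,) embedding of the open-set query (assumed input).
    Returns an (H, W) array scaled to roughly [0, 1].
    """
    f = feature_map / (np.linalg.norm(feature_map, axis=-1, keepdims=True) + eps)
    q = query / (np.linalg.norm(query) + eps)
    sim = f @ q                                # (H, W) cosine similarities
    lo, hi = sim.min(), sim.max()
    return (sim - lo) / (hi - lo + eps)        # min-max normalization


# Toy example: a 2x2 "image" with 3-D features; pixel (1, 0) matches the query.
feature_map = np.array(
    [[[1.0, 0.0, 0.0], [0.0, 1.0, 0.0]],
     [[0.0, 0.0, 1.0], [1.0, 1.0, 0.0]]]
)
query = np.array([0.0, 0.0, 2.0])
heat = query_heatmap(feature_map, query)
hottest = np.unravel_index(np.argmax(heat), heat.shape)  # pixel with the strongest response
```

Min-max normalization is used here only to make the map easy to display; a real system might instead threshold raw similarities or compare against negative queries.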

Editing

Movement & Translation